Mathematics of LLMs in Everyday Language

Turing

@turingapp

Explore science like never before: accessible, thrilling, and packed with awe-inspiring moments. Join us on an adventure to fuel your curiosity with hundreds of curated audio shows. Download Turing from the iOS App Store or Google Play for free, or listen on our website.

Video Description

Explore science like never before: accessible, thrilling, and packed with awe-inspiring moments. Fuel your curiosity with hundreds of free, curated STEM audio shows. Download The Turing App on the Apple App Store or Google Play Store, or listen at https://theturingapp.com/

Foundations of Thought: Inside the Mathematics of Large Language Models

⏱️Timestamps⏱️
00:00 Start
03:11 Claude Shannon and Information theory
03:59 ELIZA and LLM Precursors (e.g., AutoComplete)
05:43 Probability and N-Grams
09:45 Tokenization
12:34 Embeddings
16:20 Transformers
20:21 Positional Encoding
22:36 Learning Through Error
26:29 Entropy - Balancing Randomness and Determinism
29:36 Scaling
32:45 Preventing Overfitting
36:24 Memory and Context Window
40:02 Multi-Modality
48:14 Fine Tuning
52:05 Reinforcement Learning
55:28 Meta-Learning and Few-Shot Capabilities
59:08 Interpretability and Explainability
1:02:14 Future of LLMs

What if a machine could learn every word ever written, and then begin to predict, complete, and even create language that feels distinctly human? This is a cinematic deep dive into the mathematics, mechanics, and meaning behind today’s most powerful artificial intelligence systems: large language models (LLMs). From the origins of probability theory and early statistical models to the transformers that now power tools like ChatGPT and Claude, this documentary explores how machines have come to understand and generate language with astonishing fluency.

This video unpacks how LLMs evolved from basic autocomplete functions to systems capable of writing essays, generating code, composing poetry, and holding coherent conversations. We begin with the foundational concepts of prediction and probability, tracing back to Claude Shannon’s information theory and the early era of n-gram models. These early techniques could see only a few words of context, but they laid the groundwork for embedding words in mathematical space, giving rise to meaning in numbers.
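To make the n-gram idea concrete, here is a minimal sketch of a bigram model, the simplest n-gram beyond single words: it counts which word follows which and turns those counts into next-word probabilities. The toy corpus and the function names `train_bigram` and `next_word_probs` are illustrative assumptions, not material taken from the video.

```python
from collections import Counter, defaultdict


def train_bigram(text):
    """Count how often each word is followed by each other word."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts


def next_word_probs(counts, prev):
    """Convert the raw follow-counts for `prev` into probabilities."""
    followers = counts.get(prev, Counter())
    total = sum(followers.values())
    if total == 0:
        return {}  # the model has never seen `prev` followed by anything
    return {word: count / total for word, count in followers.items()}


# Hypothetical toy corpus, chosen only for illustration.
corpus = "the cat sat on the mat the cat ate the fish"
model = train_bigram(corpus)
probs = next_word_probs(model, "the")
print(probs)  # {'cat': 0.5, 'mat': 0.25, 'fish': 0.25}
```

Because the model looks only one word back, it cannot tell which "the" it is completing. That is the context limitation described above.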
The transformer architecture changed everything. Introduced in 2017, it enabled models to analyze language in full context using self-attention and positional encoding, revolutionizing machine understanding of sequence and relationships. As these models scaled to billions and even trillions of parameters, they began to show emergent capabilities: skills not directly programmed but arising from the sheer scale of training.

The video also covers critical innovations like gradient descent, backpropagation, and regularization techniques that allow these systems to learn efficiently. It explores how models balance creativity and coherence using entropy and temperature, and how memory and few-shot learning enable adaptability across tasks with minimal input.

Beyond the algorithms, we examine how we align AI with human values through reinforcement learning from human feedback (RLHF), and the role of interpretability in building trust. Multimodality adds another layer, as models increasingly combine text, images, audio, and video into unified systems capable of reasoning across sensory inputs. With advancements in fine-tuning, transfer learning, and ethical safeguards, LLMs are evolving into flexible tools with the power to transform everything from medicine to education.

If you’ve ever wondered how AI really works, or what it means for our future, this is your invitation to understand the systems already changing the world.

#largelanguagemodels #tokenization #embeddings #TransformerArchitecture #AttentionMechanism #SelfAttention #PositionalEncoding #gradientdescent #explainableai

You May Also Like

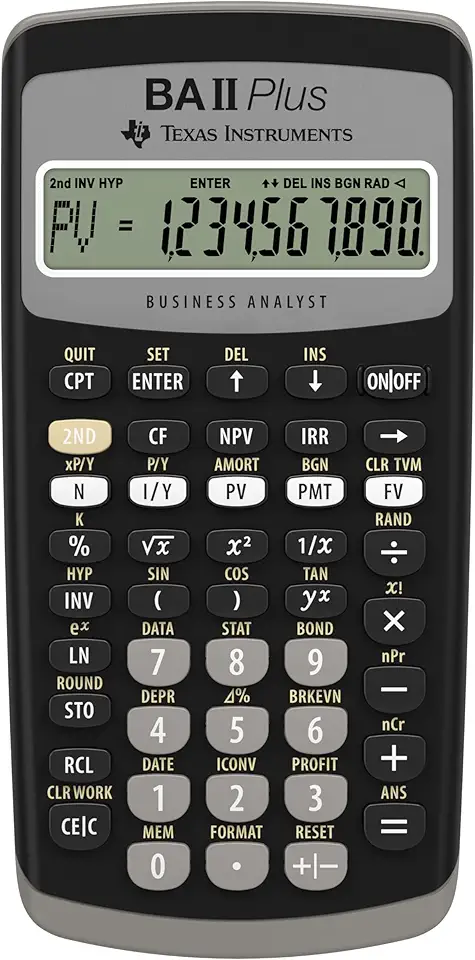

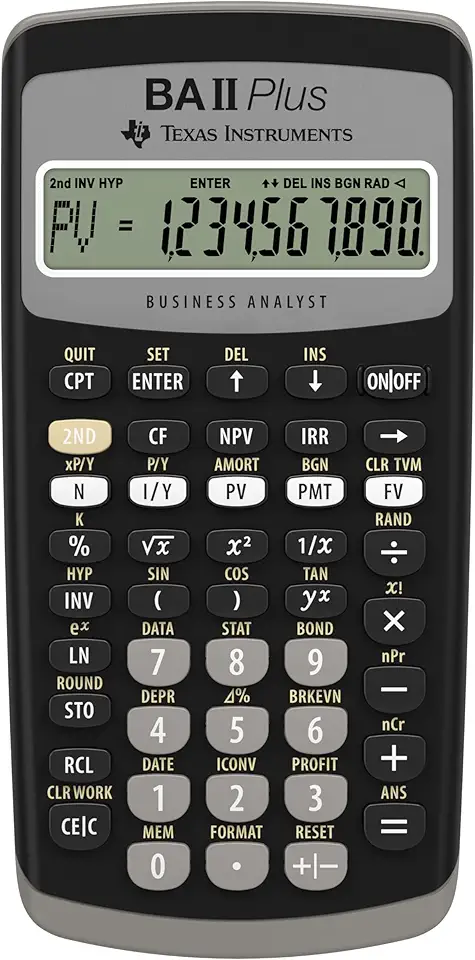

Essential Calculators for LLM Enthusiasts

AI-recommended products based on this video

Texas Instruments BA II Plus Financial Calculator, Black

Texas Instruments BA II Plus Financial Calculator, Black

Charger for Dell Inspiron 15 Charger, 65W Dell Inspiron 15 14 13 3000 5000 7000 Series, Dell Latitude 13 3301 3390 14 3400 3410 3420 3490 15 3500 3510 3520 3590, Dell Vostro, 4.5 * 3.0mm Power Cord

45W 65W AC Adapter Laptop Charger for Dell Inspiron 15 14 13 17 3000 5000 7000 Series 5558 5559 Charger Latitude E6440 E6430 3520 3420 XPS 13 14 9333 9350 Notebook Dell Computer Power Supply Cord

65W 45W AC Adpter for Dell Laptop Charer fit for Dell Inspiron 13 14 15 17 3000 5000 7000 Series 3520 3558 5558 3583 3511 5570 XPS 9333 9350 Latitude 3310 Vostro 3425 3520 Computer Round Tips Charger

90W AC Power Adapter for Dell OptiPlex 3000 5000 7000 9020 Series Micro Desktop Inspiron 20" 22" 24" 27" AIO Desktop, Inspiron 15 16 17 14 13 3000 5000 7000 Series Laptop Charger

Apple 2025 MacBook Air 13-inch Laptop with M4 chip: Built for Apple Intelligence, 16GB Unified Memory, 256GB SSD Storage, Touch ID; Sky Blue - English Keyboard

![TP-Link USB C To Ethernet Adapter (UE300C) - J45 To USB C [Thunderbolt 3/4 Compatible] Type-C Gigabit Ethernet LAN Network Adapter, Compatible With Apple MacBook Pro 2017-2023, MacBook Air, And More](https://m.media-amazon.com/images/I/418xZkoG-RL._AC_UY654_FMwebp_QL65_.jpg)