Stanford CME295 Transformers & LLMs | Autumn 2025 | Lecture 1 - Transformer

Video Description

For more information about Stanford’s graduate programs, visit: https://online.stanford.edu/graduate-education

September 26, 2025

This lecture covers:
• Background on NLP and tasks
• Tokenization
• Embeddings
• Word2vec, RNN, LSTM
• Attention mechanism
• Transformer architecture

To follow along with the course schedule and syllabus, visit: https://cme295.stanford.edu/syllabus/

Chapters:
00:00:00 Introduction
00:03:54 Class logistics
00:09:40 NLP overview
00:22:57 Tokenization
00:30:28 Word representation
00:53:23 Recurrent neural networks
01:06:47 Self-attention mechanism
01:13:53 Transformer architecture
01:29:53 Detailed example

Afshine Amidi is an Adjunct Lecturer at Stanford University.
Shervine Amidi is an Adjunct Lecturer at Stanford University.

View the course playlist: https://www.youtube.com/playlist?list=PLoROMvodv4rOCXd21gf0CF4xr35yINeOy

Master Transformers Today

AI-recommended products based on this video

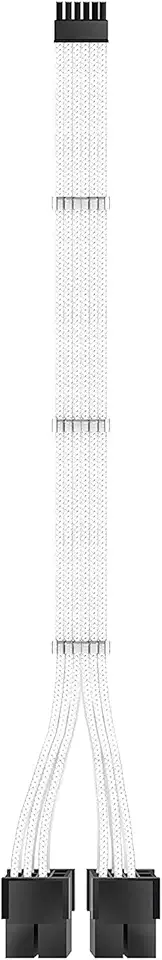

EZDIY-FAB RTX 3000 Series 12 Pin to Dual 8 Pin PCIe Sleeved Extension Cable 300 MM- Connector for NVIDIA Ampere GEFORCE RTX 3060ti 3070 3080 FE Funder Edition- White

Google Pixel Buds Pro 2 - Noise Canceling Earbuds - Up to 31 Hour Battery Life with Charging Case - Bluetooth Headphones - Compatible with Android - Hazel

Deeyaple USB C to Aux, 4FT/1.2M, Type C to 3.5mm Audio Cable Headphone Jack Cable for Car Mobile Phone, iPhone 16 15, iPad Pro, Samsung Galaxy S24 S23 S2010, Google Pixel,Oneplus Grey (1)

Car Carplay Woven Cable for iPhone 16 15 3.3FT USB A to USB C 3.2 Gen 2 Carplay Adapter Wire for iPhone 16 15 Pro Max, iPad Pro/Air, Samsung Galaxy S25/S24/S23/S22/S21 Google Pixel, Car Charger Cable